Seedance 2.0

Text-to-video, image-to-video, and multimodal AI video generation.

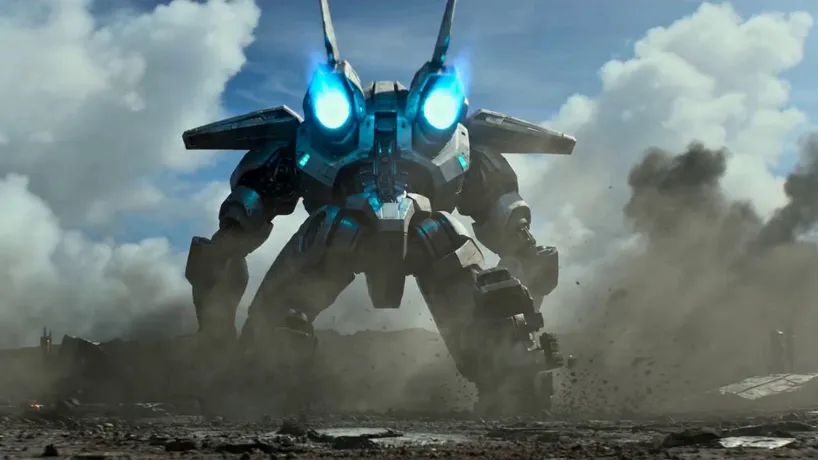

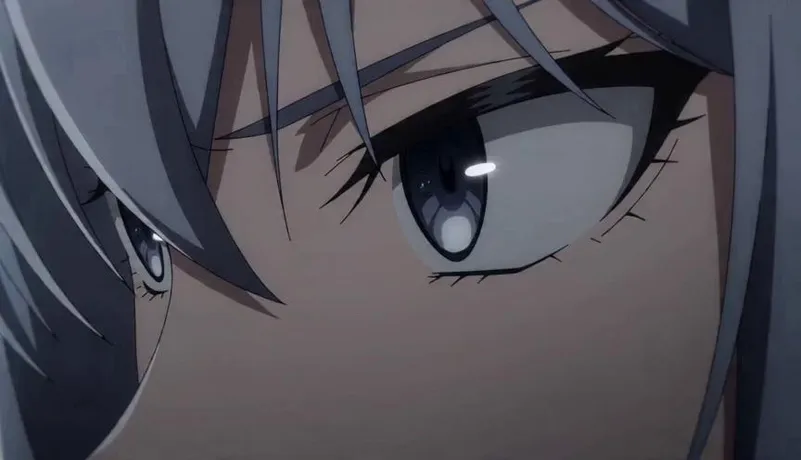

Made with Seedance 2.0

See what's possible, from cinematic VFX to anime trailers to product ads.

How It Works

Choose Your Mode

Pick from three generation modes: Text-to-Video for prompt-only creation, Image-to-Video with first/last frame anchors, or Multimodal to combine images, videos, and audio clips as references using @labels in your prompt.

Configure & Prompt

Select Fast for rapid iteration or Pro for polished output. Set resolution, aspect ratio, and duration (4–15 seconds). Enable Web Search for real-world visual grounding or Audio for synchronized sound generation. Write your scene description and hit Generate.

Generate & Download

Your video is generated in approximately 30–40 seconds at 720p. Preview it directly in the browser with embedded audio, then download the MP4, ready to post, edit, or chain into longer sequences using the return-last-frame option.

What Is Seedance 2.0?

Seedance 2.0 is ByteDance's most advanced AI video generation model, released in early 2026. It currently holds the #1 position on the Artificial Analysis Video Arena leaderboard for both text-to-video (Elo 1,273) and image-to-video (Elo 1,356), surpassing Kling 3.0, Google Veo 3, OpenAI Sora 2, and Runway Gen-4.5.

The model introduces quad-modal input: it accepts text, images, video clips, and audio files in the same request. You can provide a face photo, a motion reference video, and a voice clip in a single generation, and the model synthesizes them into one coherent video. The @label binding system links specific tokens in your prompt to specific uploaded assets, giving precise control over which reference governs which part of the output.

Seedance 2.0 generates audio and video jointly in a single forward pass, producing temporally aligned dialogue, ambient soundscapes, sound effects, and music. Its improved physics simulation handles collisions with realistic weight, fabric dynamics, and natural character motion even in high-action sequences. Combined with web search grounding for real-world visual reference, it delivers the most versatile and highest-quality AI video generation available today.

Key Features

Ranked #1 on the Artificial Analysis Video Arena for both text-to-video and image-to-video.

Quad-Modal Input

Combine text, images, video clips, and audio files in a single generation. Upload up to 9 images, 3 videos, and 3 audio clips as references, and use @labels to bind them to specific parts of your prompt.
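As a rough illustration of how the reference limits and @label binding fit together, here is a minimal Python sketch that checks a prompt against a set of uploaded assets before submission. The label syntax (`@name`), the `check_references` helper, and the asset dictionary shape are assumptions made for this example, not a documented Seedance interface; only the 9/3/3 limits come from this page.

```python
import re
from collections import Counter

# Per-generation reference limits stated on this page.
LIMITS = {"image": 9, "video": 3, "audio": 3}

# Assumed label syntax: "@" followed by a name such as "@hero" or "@track1".
LABEL_PATTERN = re.compile(r"@([A-Za-z_][A-Za-z0-9_]*)")


def check_references(prompt: str, assets: dict[str, str]) -> list[str]:
    """Return a list of problems; an empty list means the request is consistent.

    `assets` maps a label name (without "@") to its kind:
    "image", "video", or "audio".
    """
    problems = []

    # Enforce the per-kind upload limits.
    for kind, count in Counter(assets.values()).items():
        if kind not in LIMITS:
            problems.append(f"unknown asset kind: {kind}")
        elif count > LIMITS[kind]:
            problems.append(f"too many {kind} references: {count} > {LIMITS[kind]}")

    # Every label used in the prompt should point at an uploaded asset.
    used = set(LABEL_PATTERN.findall(prompt))
    known = set(assets)
    for label in sorted(used - known):
        problems.append(f"prompt uses @{label} but no asset has that label")

    # An uploaded asset that the prompt never mentions is probably a mistake.
    for label in sorted(known - used):
        problems.append(f"asset @{label} is never referenced in the prompt")

    return problems
```

For example, `check_references("@hero walks like @walk", {"hero": "image", "walk": "video"})` returns an empty list, while a prompt that mentions `@voice` without a matching upload is reported as a problem.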

Native Audio Co-Generation

Audio and video are generated jointly in a single forward pass rather than stitched together afterwards. Dialogue, ambient soundscapes, sound effects, and music are temporally aligned with the visuals from the ground up.

Web Search Grounding

Enable web search to let the model pull real-world visual references from the internet. Grounding in actual imagery produces more accurate content for specific people, places, brands, and visual styles.

Fast & Quality Tiers

Fast mode is for rapid iteration and preview: check layouts, timing, and composition at lower cost. Quality (Pro) mode targets maximum visual fidelity, with stable textures, detailed faces, and polished final output.

Advanced Physics Simulation

Realistic collisions with weight, fabric tearing and draping, fluid dynamics, and natural character motion in high-action sequences. A major leap over previous models in physical plausibility.

Flexible Duration Control

Generate videos from 4 to 15 seconds with fine-grained control. Chain clips using the return-last-frame option to build longer sequences with consistent visual continuity across shots.
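The chaining idea can be sketched in Python as follows. `plan_clips` splits a target length into whole-second clips that each stay within the 4 to 15 second range, and `chain_clips` feeds each clip's last frame into the next generation. The `generate` callable is a hypothetical stand-in supplied by the caller, not a real Seedance API; its signature is an assumption made for illustration.

```python
import math

MIN_CLIP_S = 4   # shortest clip length stated on this page
MAX_CLIP_S = 15  # longest clip length stated on this page


def plan_clips(total_seconds: int) -> list[int]:
    """Split a target length into whole-second clips of 4 to 15 seconds each."""
    if total_seconds < MIN_CLIP_S:
        raise ValueError(f"total must be at least {MIN_CLIP_S} seconds")

    # Use as few clips as possible, then spread the length evenly across them.
    n_clips = math.ceil(total_seconds / MAX_CLIP_S)
    base, extra = divmod(total_seconds, n_clips)
    # The first `extra` clips get one additional second.
    return [base + 1 if i < extra else base for i in range(n_clips)]


def chain_clips(prompts, durations, generate):
    """Generate clips in order, anchoring each one on the previous last frame.

    `generate` is a hypothetical callable: it takes
    (prompt, duration_s, first_frame) and returns (clip, last_frame).
    """
    clips = []
    first_frame = None  # the first clip has no anchor frame
    for prompt, duration in zip(prompts, durations):
        clip, first_frame = generate(prompt, duration, first_frame)
        clips.append(clip)
    return clips
```

A 40-second sequence, for instance, is planned as three clips of 14, 13, and 13 seconds. Because each clip depends on the last frame of the one before it, the clips in a chain have to be generated one after another rather than in parallel.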

Technical Specifications

A detailed look at what Seedance 2.0 delivers under the hood.

| Specification | Details |
|---|---|
| Developer | ByteDance Seed Team |
| Architecture | Dual-Branch Diffusion Transformer with sparse architecture |
| Leaderboard Rank | #1 T2V (Elo 1,273) · #1 I2V (Elo 1,356) on Artificial Analysis |
| Max Resolution | 720p |
| Clip Duration | 4–15 seconds (flexible) |
| Aspect Ratios | 16:9, 9:16, 1:1, 4:3, 3:4, 21:9 |
| Input Modalities | Text + up to 9 images, 3 videos, 3 audio files |
| Generation Modes | Text-to-video, Image-to-video (first/last frame), Multimodal reference |
| Audio | Native audio-visual co-generation (stereo) |
| Speed Tiers | Fast (rapid iteration) · Quality / Pro (maximum fidelity) |
| Generation Speed | ~30–40 seconds per clip at 720p |
| Web Search | Optional real-world visual grounding via web search |
| Output Format | MP4 (H.264) with AAC audio, 24 fps |
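The constraints in this table can be captured as a small settings object that rejects invalid combinations before a request is made. The sketch below is an assumption-laden illustration: the `GenerationSettings` class, its field names, and the lowercase mode and tier strings are invented for this example and do not describe an official SDK. The allowed values and the 24 fps figure are taken from the table.

```python
from dataclasses import dataclass

# Allowed values, taken from the specification table.
ASPECT_RATIOS = {"16:9", "9:16", "1:1", "4:3", "3:4", "21:9"}
MODES = {"text-to-video", "image-to-video", "multimodal"}
TIERS = {"fast", "pro"}
FPS = 24  # output frame rate listed in the table


@dataclass(frozen=True)
class GenerationSettings:
    """Hypothetical container for one generation request's settings."""

    mode: str
    tier: str
    aspect_ratio: str
    duration_s: int
    audio: bool = False
    web_search: bool = False

    def __post_init__(self):
        # Validate each field against the documented options.
        if self.mode not in MODES:
            raise ValueError(f"unsupported mode: {self.mode}")
        if self.tier not in TIERS:
            raise ValueError(f"unsupported tier: {self.tier}")
        if self.aspect_ratio not in ASPECT_RATIOS:
            raise ValueError(f"unsupported aspect ratio: {self.aspect_ratio}")
        if not 4 <= self.duration_s <= 15:
            raise ValueError("duration must be between 4 and 15 seconds")

    @property
    def frame_count(self) -> int:
        """Number of output frames at 24 fps."""
        return FPS * self.duration_s
```

With these definitions, `GenerationSettings("multimodal", "pro", "9:16", 15).frame_count` evaluates to 360, and a 20-second duration raises `ValueError`.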

Who Uses Seedance 2.0?

From solo creators to enterprise teams, Seedance 2.0 powers the most demanding video workflows.

Advertising & E-Commerce

Turn product photos into narrative demo videos with multimodal references. Upload a product image, a motion style video, and background music — generate polished ad creatives in under a minute. Batch ad variations with locked brand consistency.

Music Videos & Audio-Visual

Upload audio tracks as references and generate rhythm-matched visuals. The native audio co-generation ensures sound effects and ambient audio are perfectly synchronized with the visual narrative and pacing.

Social Media at Scale

Use Fast mode for rapid iteration and previews, then switch to Pro for final output. Native 9:16 support, flexible durations, and quick generation make it easy to maintain a high-volume posting schedule across platforms.

Short Films & Storytelling

Create multi-shot narratives with consistent characters using the return-last-frame option to chain clips. Combine director-level camera control with multimodal references for cinematic sequences that feel professionally directed.

Education & Training

Generate video lessons from scripts and reference materials. The multimodal input lets you combine diagrams, demonstration clips, and narration audio into structured educational content with synchronized visuals and sound.

Brand & Style Transfer

Enable Web Search to ground generation in real-world visual references, or upload style reference videos and images. Maintain brand-specific aesthetics across all generated content without manual editing or post-production.

Seedance 2.0 vs Competitors

See how Seedance 2.0 stacks up against other leading AI video models.

| Feature | Seedance 2.0 | Sora 2 | Kling 3.0 | Runway Gen-4.5 |
|---|---|---|---|---|
| Arena Rank (T2V) | #1 | #4 | #2 | #5 |
| Multimodal Input | Quad-modal (text+img+video+audio) | Text + Image | Text + Image | Text + Image |
| Native Audio | Joint co-generation | Post-hoc | Post-hoc | No |
| Web Search | Yes | No | No | No |
| Speed Tiers | Fast + Pro | Single tier | Single tier | Turbo + Standard |
| Max Duration | 15 seconds | 20 seconds | 10 seconds | 10 seconds |

Create Stunning Videos with Seedance 2.0

The highest-ranked AI video generator with quad-modal input, native audio, web search grounding, and Fast/Pro tiers. No video editing experience required.

Free credits for new users. No credit card required.